Why Data Quality Determines 80% of AI Success

The success of AI projects depends less on models and more on data quality. This article explains why data quality determines up to 80% of AI success, which pitfalls are most common, and how to build reliable data foundations.

One of the biggest misconceptions in AI projects is that success comes from choosing the right model. In reality, most of it depends on data quality.

Data Wins, Not Models

Even the most advanced models fail when trained on poor data.

Incomplete, inconsistent, or inaccurate data leads to:

- incorrect predictions

- bias in outcomes

- unreliable systems

That’s why data should be the primary focus in AI projects.

Key Dimensions of Data Quality

Data quality is defined by several factors:

- Accuracy: How well the data reflects reality

- Completeness: Whether all required records and values are present

- Consistency: Alignment across sources

- Timeliness: How up-to-date the data is

A weakness in any of these areas degrades model performance.
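The four dimensions above can each be turned into a number and tracked over time. The sketch below is one possible way to do that, not a standard: the record layout, the field names (`email`, `country`, `updated_at`), the 30-day freshness window, and the list of allowed country codes are all assumptions made for illustration. True accuracy needs a trusted reference to compare against, so the example uses a simple allowed-values rule as a rough proxy.

```python
from datetime import datetime, timedelta


def completeness(records, required_fields):
    """Share of records where every required field is present and non-empty."""
    if not records:
        return 0.0
    complete = sum(
        all(r.get(f) not in (None, "") for f in required_fields) for r in records
    )
    return complete / len(records)


def accuracy_proxy(records, field, allowed_values):
    """Share of records whose `field` holds an allowed value (a proxy, not ground truth)."""
    if not records:
        return 0.0
    return sum(r.get(field) in allowed_values for r in records) / len(records)


def consistency(source_a, source_b, key, field):
    """Share of records present in both sources whose `field` values agree."""
    b_by_key = {r[key]: r for r in source_b}
    shared = [r for r in source_a if r[key] in b_by_key]
    if not shared:
        return 0.0
    return sum(r[field] == b_by_key[r[key]][field] for r in shared) / len(shared)


def timeliness(records, ts_field, now, max_age):
    """Share of records updated within `max_age` of `now`."""
    if not records:
        return 0.0
    return sum(now - r[ts_field] <= max_age for r in records) / len(records)


# Hypothetical sample data: the same customers seen in two systems.
now = datetime(2024, 1, 10)
crm = [
    {"id": 1, "email": "a@example.com", "country": "DE", "updated_at": datetime(2024, 1, 9)},
    {"id": 2, "email": "", "country": "FR", "updated_at": datetime(2023, 6, 1)},
    {"id": 3, "email": "c@example.com", "country": "US", "updated_at": datetime(2024, 1, 5)},
    {"id": 4, "email": "d@example.com", "country": "XX", "updated_at": datetime(2024, 1, 8)},
]
billing = [
    {"id": 1, "country": "DE"},
    {"id": 2, "country": "ES"},
    {"id": 3, "country": "US"},
]

report = {
    "completeness": completeness(crm, ["email", "country"]),
    "accuracy_proxy": accuracy_proxy(crm, "country", {"DE", "FR", "US", "ES"}),
    "consistency": consistency(crm, billing, "id", "country"),
    "timeliness": timeliness(crm, "updated_at", now, timedelta(days=30)),
}
for name, score in report.items():
    print(f"{name}: {score:.2f}")
```

Scores like these are most useful as trends: a sudden drop in any one of them is a signal to investigate before the next training run.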

Common Mistakes

Many teams make the same critical mistakes:

- skipping data cleaning

- designing ETL pipelines poorly

- running without monitoring

- trusting data without validation

These mistakes lead models to learn the wrong patterns.
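As an illustration of the last mistake, validation can be as simple as checking each row against explicit rules before it reaches training. The following is a minimal sketch under assumed rules: the `age` and `label` fields, the 0 to 120 range, and the binary label set are hypothetical and stand in for whatever constraints a real dataset has.

```python
def validate_row(row):
    """Return a list of problems found in one row; an empty list means it passed."""
    problems = []
    age = row.get("age")
    if age is None:
        problems.append("age missing")
    elif not isinstance(age, (int, float)) or not 0 <= age <= 120:
        problems.append(f"age out of range: {age!r}")
    label = row.get("label")
    if label not in (0, 1):
        problems.append(f"label not 0 or 1: {label!r}")
    return problems


def split_valid(rows):
    """Separate rows into those safe to train on and those rejected, with reasons."""
    valid, rejected = [], []
    for index, row in enumerate(rows):
        problems = validate_row(row)
        if problems:
            rejected.append((index, problems))
        else:
            valid.append(row)
    return valid, rejected


# Hypothetical training rows, three of which break a rule.
rows = [
    {"age": 34, "label": 1},
    {"age": -5, "label": 0},
    {"age": None, "label": 1},
    {"age": 51, "label": 2},
]

valid, rejected = split_valid(rows)
print(f"{len(valid)} valid, {len(rejected)} rejected")
for index, problems in rejected:
    print(f"row {index}: {', '.join(problems)}")
```

Keeping the rejection reasons, rather than silently dropping rows, makes it possible to trace bad data back to its source.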

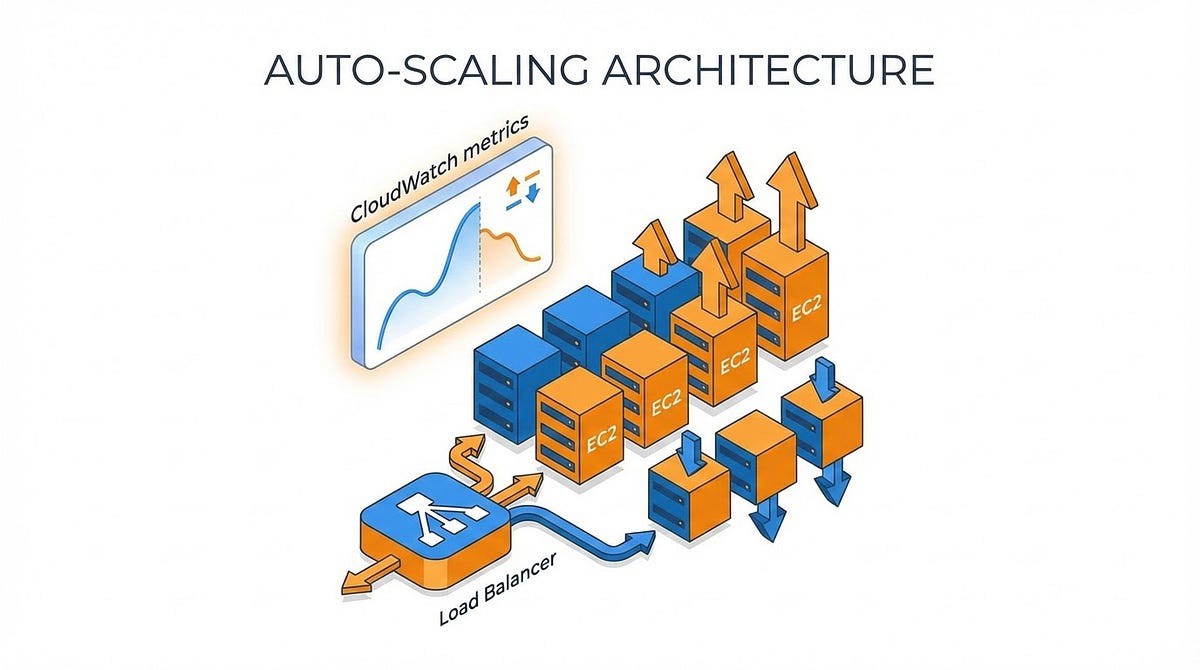

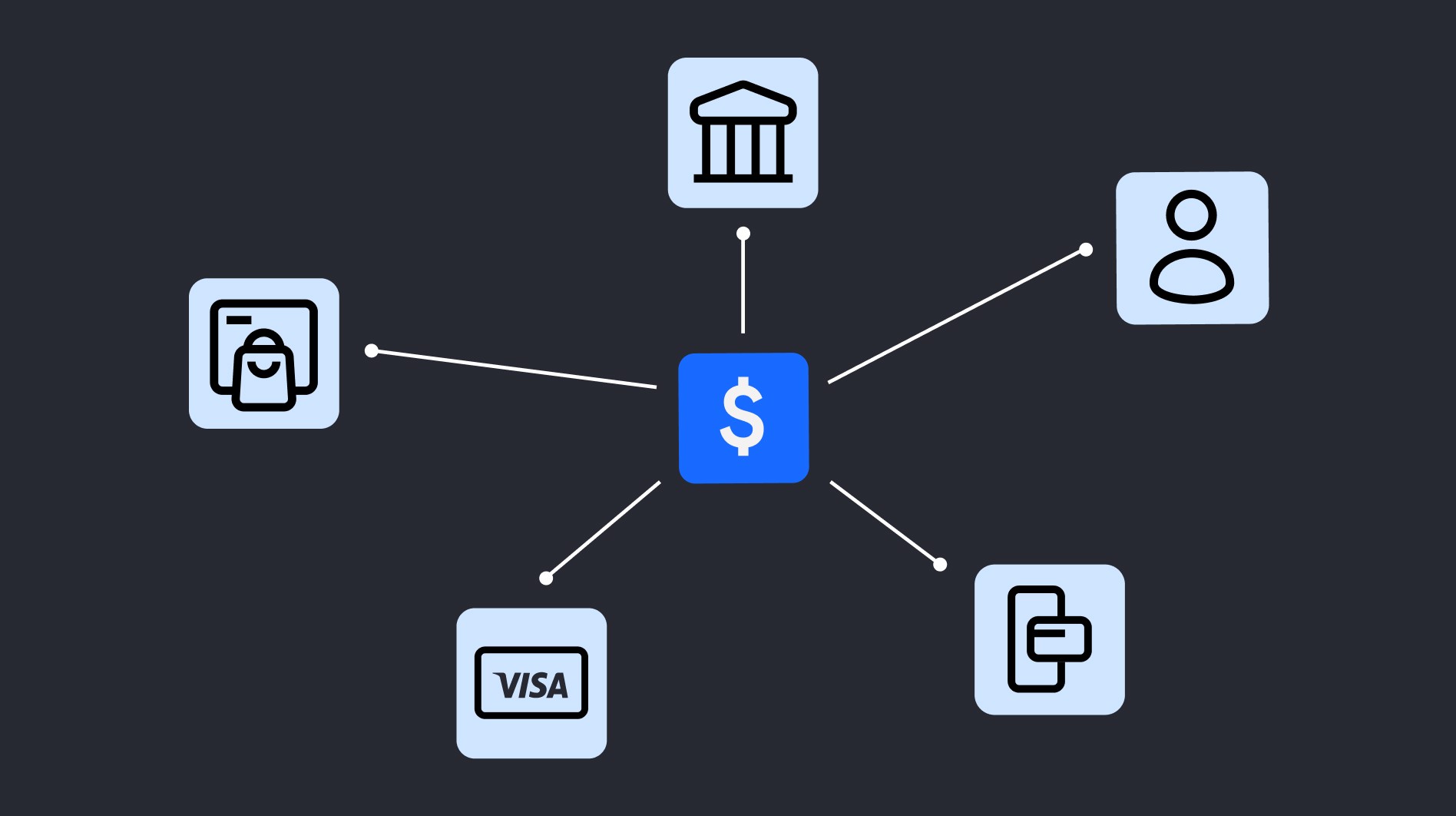

The Role of Data Platforms

Successful AI systems are built on strong data foundations:

- ETL pipelines

- data warehouses and lakes

- data quality checks

Without these, AI becomes guesswork.
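One way these pieces fit together is a quality gate between the transform and load steps of an ETL pipeline, so that a bad batch never reaches the warehouse. The sketch below assumes a toy pipeline built from plain functions; `quality_gate`, `run_pipeline`, the thresholds, and the in-memory `warehouse` list are illustrative names and values, not part of any particular platform.

```python
class QualityGateError(Exception):
    """Raised when a batch fails its quality checks and must not be loaded."""


def quality_gate(batch, key, min_rows, max_null_rate):
    """Check a whole batch; raise with every failure found instead of loading it."""
    failures = []
    if len(batch) < min_rows:
        failures.append(f"only {len(batch)} rows, expected at least {min_rows}")
    keys = [row[key] for row in batch]
    if len(set(keys)) != len(keys):
        failures.append(f"duplicate values in key column {key!r}")
    cells = [value for row in batch for value in row.values()]
    null_rate = sum(v is None for v in cells) / len(cells) if cells else 0.0
    if null_rate > max_null_rate:
        failures.append(f"null rate {null_rate:.0%} exceeds {max_null_rate:.0%}")
    if failures:
        raise QualityGateError("; ".join(failures))


def run_pipeline(extract, transform, load, **gate_settings):
    """Extract, transform, check, and only then load. Returns the rows loaded."""
    batch = [transform(row) for row in extract()]
    quality_gate(batch, **gate_settings)
    load(batch)
    return len(batch)


warehouse = []  # stand-in for a real warehouse table


def clean(row):
    """Trim names and turn empty names into explicit nulls."""
    name = row["name"].strip() if row["name"] else None
    return {**row, "name": name}


def good_source():
    return [{"id": 1, "name": " Ada "}, {"id": 2, "name": "Grace"}]


def bad_source():
    return [{"id": 3, "name": None}, {"id": 3, "name": ""}]


settings = dict(key="id", min_rows=2, max_null_rate=0.1)
run_pipeline(good_source, clean, warehouse.extend, **settings)
try:
    run_pipeline(bad_source, clean, warehouse.extend, **settings)
except QualityGateError as err:
    print("batch rejected:", err)
print("rows in warehouse:", len(warehouse))
```

The design choice here is to fail the whole batch rather than load the good rows and skip the bad ones. That is stricter than many pipelines need, but it keeps partial, misleading data out of downstream training sets.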

Data Discipline in the AI Era

In AI-first systems, data quality is not optional:

- models continuously learn from data

- poor data creates systemic errors

- errors scale with the system
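Because a continuously learning system keeps absorbing whatever arrives, it helps to compare each incoming batch against a trusted baseline before the model sees it. The sketch below uses a deliberately crude check, the shift of the batch mean measured in baseline standard deviations, rather than a formal drift test. The sample values and the threshold of 3 are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def mean_shift(baseline, batch):
    """Distance between batch mean and baseline mean, in baseline standard deviations."""
    spread = stdev(baseline)
    if spread == 0:
        return 0.0 if mean(batch) == mean(baseline) else float("inf")
    return abs(mean(batch) - mean(baseline)) / spread


def should_block(baseline, batch, threshold=3.0):
    """True when the batch looks too different from the baseline to learn from."""
    return mean_shift(baseline, batch) > threshold


# Hypothetical feature values: a trusted history and two incoming batches.
baseline = [10.0, 12.0, 11.0, 9.0, 13.0, 10.0, 12.0, 11.0]
normal_batch = [11.0, 10.0, 12.0, 11.0]
broken_batch = [110.0, 100.0, 120.0, 110.0]  # e.g. an upstream unit change

for name, batch in [("normal", normal_batch), ("broken", broken_batch)]:
    status = "blocked" if should_block(baseline, batch) else "accepted"
    print(f"{name} batch: shift={mean_shift(baseline, batch):.1f}, {status}")
```

A check this simple only catches large shifts in a single numeric feature, but even that is enough to stop an error such as a changed unit from being learned and then repeated at scale.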

Conclusion

In AI, data, not models, determines success. Investing in data quality is investing in reliable and scalable AI systems.